The tutorial has been updated to work with ARCore 1.12.0, so we're now able to determine whether the image is currently being tracked by the camera and pause or resume the video accordingly.

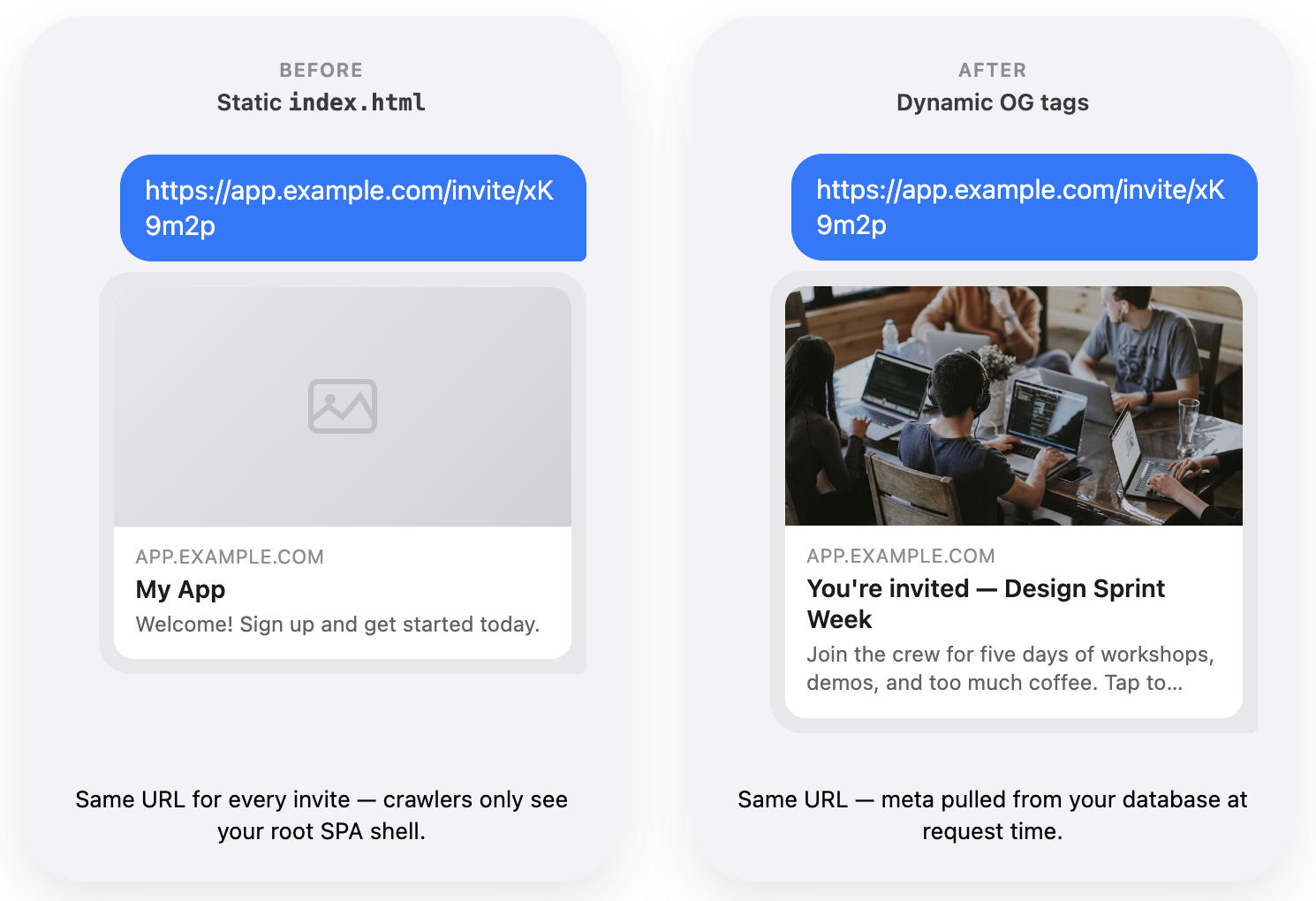

This is the third and final part of the series where I cover the combination of ARCore and Sceneform. More specifically, I'm showing you how to use Sceneform to play a video on top of an image detected with ARCore's Augmented Images capabilities.


At the end of the previous post, we ended up with a working demo of the concept described above. Still, there is room for improvement.

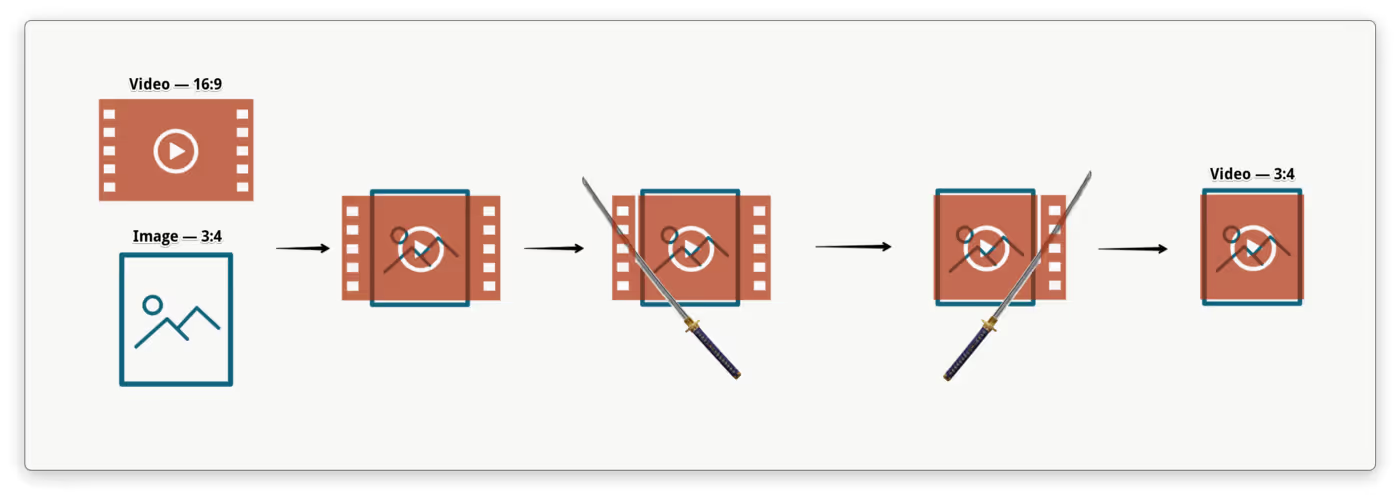

One thing we can add is the ability to remove the unwanted outer areas of a video that extend beyond the detected image. Please refer to the illustration above so we're on the same page.

Another thing that can noticeably improve the user experience is a fade-in effect when the video appears on top of the image.

TL;DR: the complete source code is available in this repository.

Material

Both improvements can be implemented mostly inside the custom video material (i.e., the augmented_video_material.mat file). Before we can modify the shader code, though, we need to add several new material parameters and change the material's blending mode.

Let's start with the new parameters. There are four of them:

- imageSize and videoSize of type float2: used to calculate the difference between the image and video aspect ratios, which defines the excess outer area

- videoCropEnabled of type bool: enables or disables the crop logic

- videoAlpha of type float: controls the overall transparency of the video

As for the blending mode, let's change it from opaque to transparent; otherwise, the video texture won't look transparent when the alpha channel is modified.
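To see why the blending mode matters, here is a small CPU-side sketch in plain Java of what the two modes do to a single pixel. The class and method names are hypothetical. It assumes, per Filament's material documentation, that transparent blending is Porter-Duff source-over on premultiplied-alpha colors and that opaque blending ignores the alpha channel.

```java
// CPU-side illustration (hypothetical names) of how two blending modes treat one pixel.
// Source colors are premultiplied RGBA in [0, 1]: {r * a, g * a, b * a, a}.
final class Blending {

    /** Opaque mode: blending is disabled, so the source RGB replaces dstRgb and alpha is ignored. */
    static float[] opaque(float[] src, float[] dstRgb) {
        return new float[] {src[0], src[1], src[2]};
    }

    /** Transparent mode: Porter-Duff source-over, out = src + dst * (1 - srcAlpha). */
    static float[] transparent(float[] src, float[] dstRgb) {
        float k = 1f - src[3]; // how much of the destination (the camera image) shows through
        return new float[] {
            src[0] + dstRgb[0] * k,
            src[1] + dstRgb[1] * k,
            src[2] + dstRgb[2] * k
        };
    }
}
```

A cropped fragment whose channels are all multiplied by 0.0 becomes {0, 0, 0, 0}: with source-over it leaves the camera image untouched, while with opaque blending it would be painted black.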

See the code below with all the changes applied:

Crop

Oh boy, it looks like developing crop features on Android is my thing. Back in the day, when I was working at Yalantis, I created the uCrop library: one special kind of fun adventure into bitmap decoding, matrix calculations, and native libraries. And look at me now, cropping videos in augmented reality.

🤷

I assume there are multiple ways to "crop" (i.e., hide the outer areas of) a video rendered on an ExternalTexture. The one I demonstrate below not only works perfectly but is also the only one I came up with and managed to implement on my own.

My idea of cropping video via shader code is kinda trivial — we just have to make the area we want to crop fully transparent. Piece of cake, right?

Let's modify the shader code to call the cropVideo() function when the videoCropEnabled parameter is true.

Notice that when the cropVideo() function returns true, material() returns immediately. That's because we don't need to execute the rest of the material() function (i.e., set the material color) for these particular UV coordinates: the fragment is going to be fully transparent anyway.
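The control flow described above can be modeled outside the GPU with a short Java sketch. It is an illustration, not the post's shader code: cropVideo is passed in as a predicate, the video texture sample is passed in as a ready-made premultiplied RGBA color, and all names are hypothetical.

```java
import java.util.function.BiPredicate;

// Hypothetical CPU-side model of the material() flow for one UV coordinate.
final class FragmentFlow {

    /** Returns the premultiplied RGBA output for (u, v). */
    static float[] material(float u, float v, boolean videoCropEnabled,
                            BiPredicate<Float, Float> cropVideo, float[] sampledColor) {
        if (videoCropEnabled && cropVideo.test(u, v)) {
            // Early return: the fragment is fully transparent, so the rest is skipped
            return new float[] {0f, 0f, 0f, 0f};
        }
        // Normal path: the sampled video color becomes the material color
        return sampledColor.clone();
    }
}
```

Checking the cheap boolean parameter first means the crop predicate is only evaluated when cropping is actually enabled.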

Next, let's define the body of the cropVideo() function. The logic goes like this:

- get the image and video size values from the material parameters

- depending on the aspect ratio difference, work with either the horizontal or the vertical excess areas

- calculate the difference between the image and video aspect ratios: that is the float2 excessArea variable

- for all UV coordinates that belong to the excess outer area, multiply the material color channels by 0.0 and return true; otherwise, return false.
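Here is one way to express those steps in plain Java: a sketch assuming the video quad is centered on the image and scaled to fully cover it (center crop), with UVs spanning the whole video from 0 to 1. The helper names are hypothetical; in the actual material the same arithmetic would run per fragment using the imageSize and videoSize parameters.

```java
// Hypothetical sketch of the crop math, assuming a centered, center-cropped video quad.
final class VideoCrop {

    /** Fraction of the video's UV range hidden on EACH side, as {x, y}. */
    static float[] excessArea(float imageWidth, float imageHeight,
                              float videoWidth, float videoHeight) {
        float imageAspect = imageWidth / imageHeight;
        float videoAspect = videoWidth / videoHeight;
        if (videoAspect > imageAspect) {
            // Video is relatively wider: the excess is on the left and right
            return new float[] {(1f - imageAspect / videoAspect) / 2f, 0f};
        }
        // Video is relatively taller (or equal): the excess is on the top and bottom
        return new float[] {0f, (1f - videoAspect / imageAspect) / 2f};
    }

    /** True if (u, v) lies in the excess outer area and should become fully transparent. */
    static boolean cropVideo(float u, float v, float[] excess) {
        return u < excess[0] || u > 1f - excess[0]
            || v < excess[1] || v > 1f - excess[1];
    }
}
```

For a square image and a 16:9 video, the visible width is 9/16 = 0.5625 of the video, so 0.21875 of the UV range is cropped on each side. Because only aspect ratios are compared, the image and video sizes don't need to share units (e.g., meters vs. pixels).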

Finally, we need to modify the playbackArVideo() function to set the material parameters defined above right after the video Node is set up.

As you can see, the Material class has several methods (e.g., setFloat2(), setBoolean()) for modifying material parameters at runtime, and the shader code can then read those values.
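The parameter wiring could look roughly like the sketch below. Sceneform's Material does provide setFloat2(), setBoolean() and setFloat(), but to keep the example runnable off-device it talks to a hypothetical MaterialParams interface that only mirrors those setters; VideoMaterialSetup and RecordingParams are illustrative names as well. One plausible source for the sizes is AugmentedImage.getExtentX()/getExtentZ() for the image and MediaPlayer.getVideoWidth()/getVideoHeight() for the video, though the post's repository may obtain them differently.

```java
import java.util.LinkedHashMap;
import java.util.Map;

/** Hypothetical stand-in mirroring the setters on Sceneform's Material. */
interface MaterialParams {
    void setFloat2(String name, float x, float y);
    void setBoolean(String name, boolean value);
    void setFloat(String name, float value);
}

final class VideoMaterialSetup {

    /** Pushes the crop and fade parameters; the names must match the .mat parameter list. */
    static void apply(MaterialParams material, float imageWidth, float imageHeight,
                      int videoWidth, int videoHeight, boolean cropEnabled) {
        material.setFloat2("imageSize", imageWidth, imageHeight);
        material.setFloat2("videoSize", videoWidth, videoHeight);
        material.setBoolean("videoCropEnabled", cropEnabled);
        // Assumption: start fully transparent so the fade-in begins from 0.0
        material.setFloat("videoAlpha", 0f);
    }
}

/** Test double that records parameter values, so the sketch runs without a device. */
final class RecordingParams implements MaterialParams {
    final Map<String, Object> values = new LinkedHashMap<>();

    public void setFloat2(String name, float x, float y) { values.put(name, new float[] {x, y}); }
    public void setBoolean(String name, boolean value) { values.put(name, value); }
    public void setFloat(String name, float value) { values.put(name, value); }
}
```

Keeping the parameter names in one place like this makes a typo that silently mismatches the .mat file easier to spot.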

Fade in

To fade in the video texture, we'll use the same trick we used for cropping: modifying alpha.

The shader change is trivial; all we need to do is add one line at the end of the material() function:

When videoAlpha is 0.0, the video is fully transparent; when it is 1.0, the video is fully opaque. The rest of the fade-in logic is easy to figure out: we'll just use a ValueAnimator to interpolate the videoAlpha value from zero to hero. The start of the animation is the perfect place to set the video renderable.
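As a sketch of the fade-in math (plain Java, hypothetical names), the value a default ValueAnimator would produce can be computed directly: unless configured otherwise, ValueAnimator uses AccelerateDecelerateInterpolator, whose curve is reproduced below. The applyAlpha() helper assumes the one-line shader change scales all premultiplied color channels by videoAlpha; that is an assumption, not the post's exact line.

```java
// Hypothetical sketch of the fade-in value over time and its effect on a premultiplied color.
final class FadeIn {

    /** Same curve as Android's AccelerateDecelerateInterpolator: slow start, fast middle, slow end. */
    static float accelerateDecelerate(float t) {
        return (float) (Math.cos((t + 1) * Math.PI) / 2.0) + 0.5f;
    }

    /** videoAlpha after elapsedMs of a fade lasting durationMs: 0.0 = transparent, 1.0 = opaque. */
    static float videoAlpha(long elapsedMs, long durationMs) {
        if (durationMs <= 0) {
            return 1f; // no animation: show the video immediately
        }
        float t = Math.min(1f, Math.max(0f, (float) elapsedMs / durationMs));
        return accelerateDecelerate(t);
    }

    /** Assumed shader step: scale every premultiplied channel by videoAlpha. */
    static float[] applyAlpha(float[] rgba, float videoAlpha) {
        float[] out = new float[4];
        for (int i = 0; i < 4; i++) {
            out[i] = rgba[i] * videoAlpha;
        }
        return out;
    }
}
```

On Android, the animator's update listener would forward each animated value to the material's videoAlpha parameter via setFloat(), so the shader picks up the new value on the next frame.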

That should do it. Now let’s build the sources and give it a try!

Video center crop & fade-in demo 👇

All right, we've finally got exactly the same look and feel as the GIF at the start of the post.

Let's recap: during this series, we learned how to draw a video stream to a texture in augmented reality. We also added code to support video rotation metadata and several different scale types. Finally, we modified the shader code to use the alpha channel to crop and fade in the video. I think you'll agree that it wasn't that hard from a technical point of view. Most of the non-trivial code is the math for the different aspect ratios and scale types, not the actual ARCore or Sceneform APIs.

Nevertheless, I hope someone will find it helpful. And I’d love to read your comments, especially if you know a better way to accomplish this task.

P.S. The quality and stability of the Augmented Images feature were definitely a PITA; a casual read through the ARCore GitHub issues is enough to feel developers' pain. On the other hand, working with Sceneform (which has Filament under the hood) was extremely satisfying, and for that I tip my hat to Romain Guy.

I'd guess that most developers who have had a chance to work with ARCore, including me, would be extremely happy to see this framework evolve and improve. It's awesome that we have this kind of technology in our hands nowadays, and I really appreciate the work the ARCore team has done. Hopefully, Google's AR ambitions won't stop at Childish Gambino AR stickers and that sort of stuff ;)

P.P.S. The Augmented Images feature has improved greatly since its introduction. At the time of writing, ARCore is at version 1.12.0, and some of the major issues have been resolved. I'm sure it will get even better in the future. Go team ARCore 💪